Building a Procedural Lotus Pond in Maya: L-Systems, Python, and Pipeline Architecture

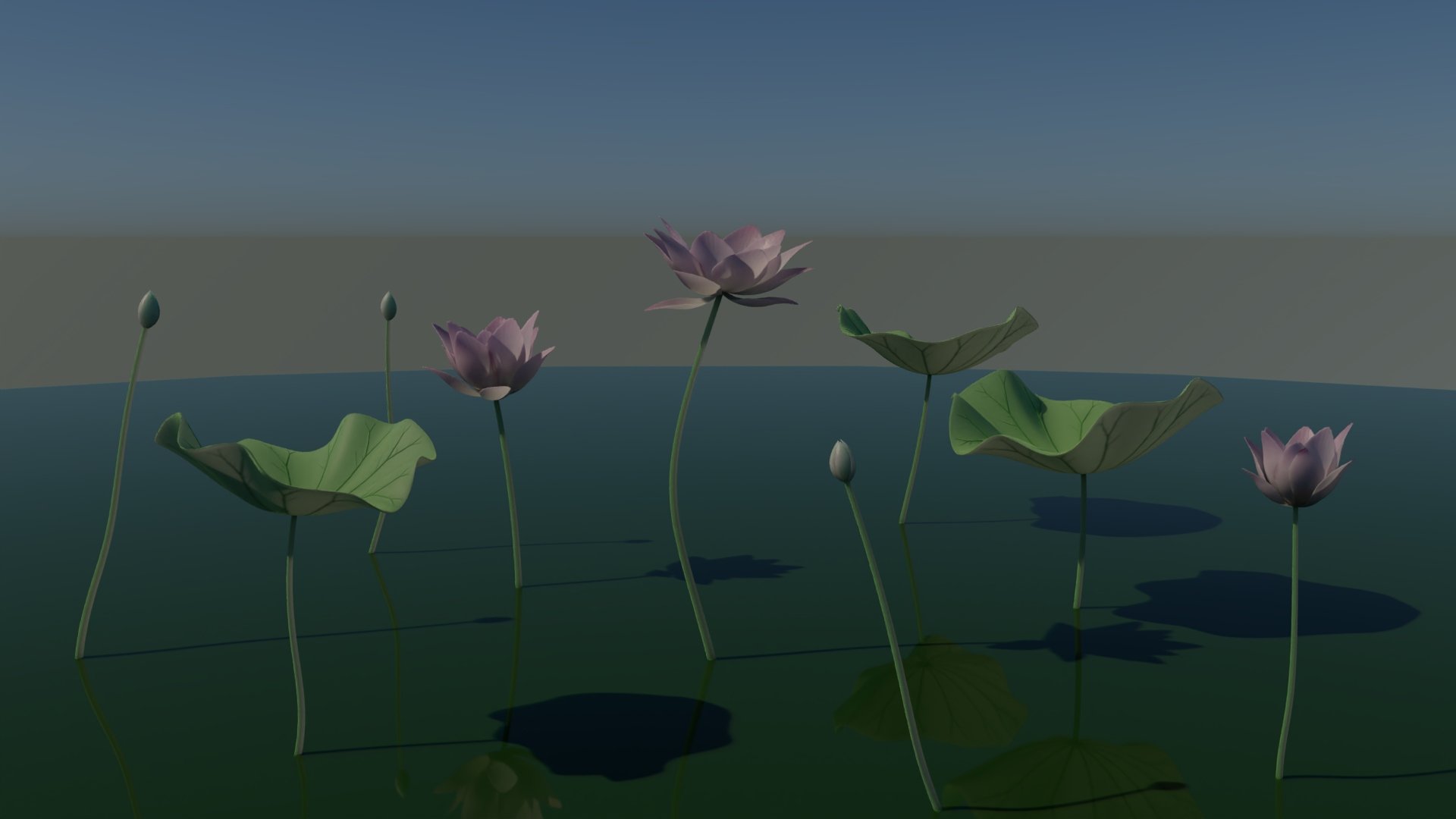

Final Output of the Procedural Lotus Pond Tool

TL;DR

A fully procedural lotus pond generator built in Maya via Python — no manual placement, no repeated assets. The tool ingests a scattered point cloud and populates it with biologically-grounded, L-system-driven flora at real-world scale, with randomized bloom states, organic noise, and collision filtering. Every plant is mathematically unique. The result is a system a layout artist could run in seconds to populate an entire environment — the kind of tool that replaces hours of manual work in a production pipeline. This post breaks down the architecture, the hard problems, and the honest limitations.

Who Is This For

This post is written for recruiters evaluating pipeline and technical art skills, and for fellow students curious about how procedural systems are designed from the ground up — not just what they produce, but why they're built the way they are.

“The goal was never to build a beautiful asset — it was to design a system that could generate thousands of them without breaking.”

01. The Objective: System-Level Set Dressing

The Problem: Manually placing and iterating on a lotus pond is inefficient.

The Solution: A point-based procedural generation tool built in Maya via Python, designed to instantly populate a scattered point cloud with unique, L-system-driven flora.

The Scope: Specifically optimized for medium-to-wide shot environment generation, allowing layout artists to quickly establish dense, organic ecosystems without manual placement.

The Standard: Adherence to strict real-world scale for immediate pipeline integration.

02. The Core Architecture (Python & Modularity)

The objective was not merely to model a lotus, but to architect a resilient, point-driven procedural system. Monolithic scripts create pipeline bottlenecks, so the tool’s architecture is strictly modular and designed for headless execution: it runs entirely from configuration data, with no UI required, which makes it compatible with batch-processing farms and studio pipelines. It is built to ingest a scattered point cloud, filter for collisions, and output high-fidelity geometry at strict real-world scale (1 unit = 1 cm).

The Centralized "Blueprint" (Data as Truth)

To ensure maximum scalability and avoid fragile UI-dependent query commands, the system is driven entirely by a master configuration dictionary (lotus_config). This "Blueprint" dictates the parameters for the L-system rules, geometry scaling, and flower proportions. By isolating the configuration data from the execution logic, the tool can easily be hooked into a pipeline database, JSON file, or batch-processing farm without rewriting a single line of core logic.
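As a minimal sketch of this separation (not the tool's actual blueprint), the dictionary below pairs defaults with an optional JSON override. Only the name lotus_config and the "rules" entry come from this post; the other keys, their values, and the load_config helper are hypothetical placeholders.

```python
import copy
import json

# Only "rules" is quoted from the tool; every other key and value here is a
# hypothetical placeholder for illustration.
DEFAULT_LOTUS_CONFIG = {
    "axiom": "X",
    "iterations": 3,
    "rules": {"X": "FF[+X][-X]", "F": "FF[-L]F[R]", "L": "[-FFFF]", "R": "[+FFFF]"},
    "leaf_radius": 30.0,      # centimeters (1 unit = 1 cm)
    "base_step_size": 1.0,
}


def load_config(json_text=None):
    """Return a config dict: defaults overridden by an optional JSON string."""
    config = copy.deepcopy(DEFAULT_LOTUS_CONFIG)
    if json_text:
        # Shallow merge: a top-level key in the JSON replaces the default value.
        config.update(json.loads(json_text))
    return config


lotus_config = load_config('{"iterations": 4, "leaf_radius": 25.0}')
```

Because the merge is shallow, an override that supplies "rules" replaces the whole rule set rather than patching individual symbols. The same function could read the JSON text from a file or a database field without touching the generation code.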

Decoupled Logic and Algorithmic Data Passing

The generation logic is heavily decoupled into single-responsibility functions, managed by two master assembly functions (assemble_lotus_master and assemble_flower_master). Data flows sequentially:

Procedural Evaluation: The L-System logic (generate_lsystem_string) calculates the growth rules and outputs a structured data payload—specifically, a string of generational instructions (e.g., FF[-L]F[R]).

Translation to Cartesian Data: This string is immediately passed to the build_vein_curves function, which mathematically interprets the characters into Cartesian space to generate NURBS curves natively at the target scale.

Geometry Instantiation: The resulting curve data and spatial transforms are then handed to the meshing functions (build_leaf_mesh, build_stem), which generate the final geometry without needing to reference the original L-system logic.

Function Isolation & Pipeline Resilience

In a production environment, tools must fail gracefully. By isolating the components, the system is structurally stress-tested against localized errors. The master assembly function acts as a strict traffic controller. If a specific stem calculation encounters an issue, the error is isolated within its own functional scope, preventing a catastrophic failure of the master scatter loop.
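One common way to get this kind of isolation is a try/except around each per-plant call inside the scatter loop. The sketch below shows that pattern under stated assumptions: scatter_with_isolation and demo_builder are hypothetical names, and the post does not say whether the tool uses exactly this mechanism.

```python
import logging

logger = logging.getLogger("lotus_scatter")


def scatter_with_isolation(points, build_plant):
    """Call build_plant on each point; log and skip any point that raises."""
    built, failed = [], []
    for index, point in enumerate(points):
        try:
            built.append(build_plant(point))
        except Exception as exc:  # broad on purpose: one bad plant must not stop the loop
            logger.warning("Point %d at %s skipped: %s", index, point, exc)
            failed.append((index, point))
    return built, failed


def demo_builder(point):
    """Hypothetical stand-in for a per-plant assembly function."""
    if point[1] != 0:
        raise ValueError("point is not on the water plane")
    return "plant_at_{}_{}".format(point[0], point[2])


built, failed = scatter_with_isolation([(0, 0, 0), (5, 2, 5), (9, 0, 3)], demo_builder)
```

Returning the failed points alongside the built ones lets a batch job report what was skipped instead of silently producing a thinner pond.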

03. Challenge I: Translating Biology into L-Systems

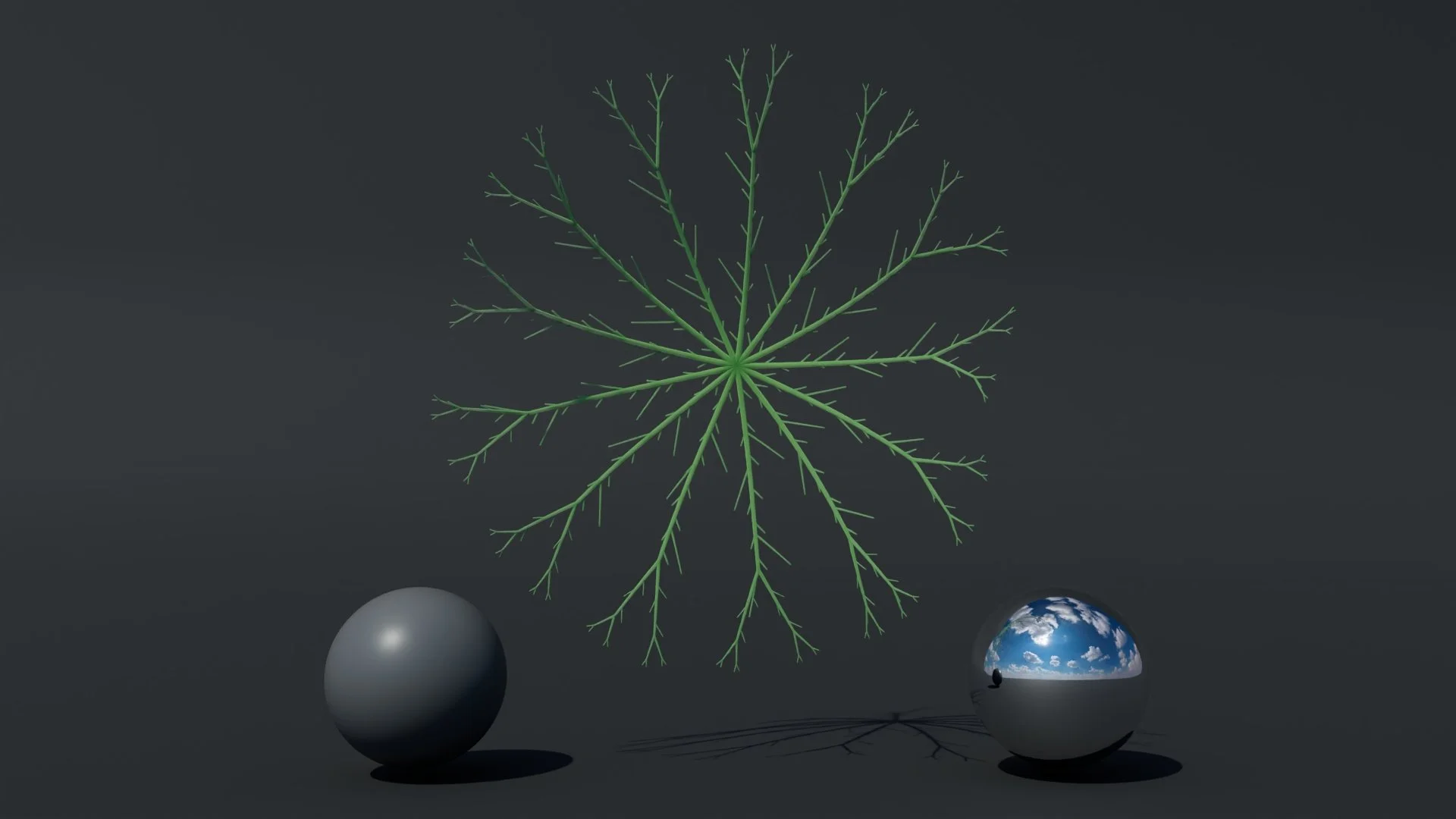

L-system Leaf Vein

The core challenge of generating organic structures in a pipeline is avoiding the "clone effect." You need biological variation grounded in strict mathematical rules. Rather than manually modeling variations or relying on heavy pre-built solvers, this tool utilizes a custom Python-based L-System parser to calculate the branching topology of the lotus veins before a single piece of geometry is instantiated.

The Biological Blueprint

The growth pattern is dictated entirely by recursive axiomatic logic stored in the master dictionary:

    "rules": {"X": "FF[+X][-X]", "F": "FF[-L]F[R]", "L": "[-FFFF]", "R": "[+FFFF]"}

This generates a complex, self-replicating string. However, a raw string is useless to Maya until it is translated into physical vectors.
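To make the rewriting step concrete, here is a small sketch of parallel string expansion using the rules quoted above. The function name matches the one mentioned earlier in this post, but its signature, the axiom "X", and the iteration counts are assumptions for illustration.

```python
def generate_lsystem_string(axiom, rules, iterations):
    """Rewrite every symbol in parallel, once per iteration."""
    current = axiom
    for _ in range(iterations):
        # Symbols without a rule ("[", "]", "+", "-") are copied unchanged.
        current = "".join(rules.get(symbol, symbol) for symbol in current)
    return current


rules = {"X": "FF[+X][-X]", "F": "FF[-L]F[R]", "L": "[-FFFF]", "R": "[+FFFF]"}
first = generate_lsystem_string("X", rules, 1)
second = generate_lsystem_string("X", rules, 2)
```

After one iteration the axiom becomes FF[+X][-X]; after two, every F has itself been replaced, which is why the string length grows quickly with each generation.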

Cartesian Translation & Stack Memory

The true architectural hurdle was building the interpreter that converts this string into spatial data. When the parser reads an F (Forward), it calculates a new Cartesian vector using the current heading via sine and cosine. This calculation is multiplied by a micro-randomized step size (rand.uniform(0.95, 1.05)), ensuring that while the macro-structure is uniform, the micro-details of every single vein are mathematically unique.

When the system encounters a branch [, it must remember its exact spatial state to return to after the branch completes. This is handled via a Last-In-First-Out (LIFO) stack memory block. Think of it like a bookmark system: before the algorithm explores a branch, it saves its exact position and heading so it can return to that precise point once the branch is done — the same way you'd fold a page corner before taking a detour.

    if char == "F":
        # Trigonometric translation modified by target scale and organic noise
        curr_step = rand.uniform(0.95, 1.05) * base_step_size
        next_x = curr_pos[0] + (curr_step * math.cos(rad))
        next_z = curr_pos[2] + (curr_step * math.sin(rad))
        next_pos = [next_x, 0, next_z]

        # The Structural Constraint
        curr_dist = math.sqrt((next_x ** 2) + (next_z ** 2))
        if curr_dist < leaf_radius:
            current_points.append(next_pos)

    # ... [Angle Evaluation Logic] ...

    elif char == "[":
        # Push exact state to LIFO stack for lateral branching
        stack.append((list(curr_pos), curr_angle, list(current_points), depth))
        current_points = [curr_pos]
        depth += 1

Architectural Boundary Enforcement

Unconstrained L-systems can grow into jagged, unpredictable shapes, a major liability in a production pipeline. The critical line in the code above is the curr_dist < leaf_radius check. Because a new point is only appended while it lies inside that radius, every vein stops at the circular silhouette of the lotus leaf. The math is allowed to be organic, but it is strictly confined to the system's design.
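The push, the matching pop, and the radial check can be seen together in a self-contained sketch that runs outside Maya. This is a simplified stand-in rather than the tool's interpreter: the turn angle, the choice to advance the position only when a step is accepted, and the polyline output format are all assumptions.

```python
import math
import random


def interpret(instructions, step=1.0, turn_deg=25.0, leaf_radius=10.0, seed=0):
    """Walk an L-system string on the XZ plane and return a list of polylines."""
    rand = random.Random(seed)
    pos, angle = (0.0, 0.0, 0.0), 0.0
    current = [pos]
    finished, stack = [], []
    for char in instructions:
        if char == "F":
            length = rand.uniform(0.95, 1.05) * step
            rad = math.radians(angle)
            nxt = (pos[0] + length * math.cos(rad), 0.0, pos[2] + length * math.sin(rad))
            # Radial constraint: ignore any step that would leave the leaf disc.
            if math.hypot(nxt[0], nxt[2]) < leaf_radius:
                current.append(nxt)
                pos = nxt
        elif char == "+":
            angle += turn_deg
        elif char == "-":
            angle -= turn_deg
        elif char == "[":
            stack.append((pos, angle, current))   # bookmark the current state
            current = [pos]                       # the branch starts a new polyline
        elif char == "]":
            if len(current) > 1:
                finished.append(current)
            pos, angle, current = stack.pop()     # return to the bookmark
        # Any other symbol (for example X) carries no drawing instruction here.
    if len(current) > 1:
        finished.append(current)
    return finished


curves = interpret("F[+F][-F]F")
```

Each returned polyline could become one curve. An unbalanced "]" would raise an IndexError on the pop, so a production version would want to validate the string first.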

04. Challenge II: The API/Syntax Bridge (Taming Maya)

Every pipeline TD eventually hits the wall of legacy software architecture. For this project, that wall was Maya’s sweepMesh feature. While incredibly powerful for generating the stems and veins, it is notoriously tricky to handle procedurally. It lacks a straightforward Python wrapper that cleanly returns the generated transform and history nodes, relying instead on active selection states and native MEL execution.

The Problem: Ghost Nodes

To procedurally taper the thickness of the sweeping veins based on their branching depth in the L-system, the script needed absolute control over the sweepMeshCreator node immediately after generation. However, calling the sweep command procedurally often leaves you blind—the geometry is created, but the script doesn't know what it's named or where it is in the hierarchy.

Collaborating with AI (Gemini as a Pair-Programmer)

Rather than spending hours brute-forcing undocumented API quirks or digging through outdated forum threads, I brought the problem to Gemini. Treating the AI as a pair-programmer, we broke the bottleneck down into two specific logical challenges:

How do we cleanly execute the MEL-based sweep command within a headless Python loop?

How do we reliably capture the newly generated geometry and history nodes when the command itself returns None?

The Solution: The "Set Difference" Bridge

Through this collaborative debugging, we engineered a robust bypass. Instead of relying on Maya to tell us what it created, the script deduces it using set mathematics.

Without a reliable handle on the new geometry, the entire tapering system breaks down. So the script takes a snapshot of the scene's mesh shapes before and after the command fires, then uses set subtraction to isolate exactly what was just created, regardless of Maya's internal naming conventions. The result is exact as long as nothing else adds meshes to the scene between the two snapshots. It's a clean bypass of an undocumented API limitation.

    import maya.cmds as cmds
    import maya.mel as mel

    # crv and current_width are supplied by the surrounding per-vein loop.

    # 1. Snapshot the existing scene state
    existing_meshes = cmds.ls(type="mesh") or []

    # 2. Bridge Python and MEL to execute the sweep
    cmds.select(crv, replace=True)
    mel.eval('sweepMeshFromCurve -oneNodePerCurve 1;')

    # 3. Snapshot the new state and calculate the delta
    current_meshes = cmds.ls(type="mesh") or []
    new_meshes = list(set(current_meshes) - set(existing_meshes))
    if not new_meshes:
        raise RuntimeError("Sweep created no mesh for curve: {}".format(crv))

    # 4. Traverse the isolated mesh's history to find the Sweep node
    mesh_shape = new_meshes[0]
    raw_history = cmds.listHistory(mesh_shape)
    sweep_node = cmds.ls(raw_history, type="sweepMeshCreator")[0]

    # 5. Inject procedural tapering based on L-System depth
    cmds.setAttr(f"{sweep_node}.scaleProfileX", current_width)
    cmds.setAttr(f"{sweep_node}.taper", 1)

By engineering this bridge, the system retains its procedural integrity. The mathematical L-system controls the width and taper variables, while this Python-to-MEL bridge reliably handles the brute-force geometry generation under the hood, completely immune to Maya's internal naming conventions.
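The same before-and-after idea can be wrapped in a small helper that does not depend on Maya at all, which makes it easy to test. In this sketch capture_new_nodes is a hypothetical name, and the list standing in for the scene is fake data.

```python
def capture_new_nodes(list_nodes, action):
    """Run action() and return the sorted names that list_nodes() reports only afterwards."""
    before = set(list_nodes() or [])
    action()
    after = set(list_nodes() or [])
    return sorted(after - before)


# Stand-in scene so the sketch runs outside Maya.
fake_scene = ["pondShape", "rockShape"]
created = capture_new_nodes(
    lambda: list(fake_scene),
    lambda: fake_scene.append("sweepShape1"),
)
```

Inside Maya, the two callables would be something like lambda: cmds.ls(type="mesh") and a lambda wrapping the mel.eval sweep call. Passing long=True to cmds.ls may be worth considering so that two shapes with the same short name under different parents cannot be confused.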

05. Controlled Chaos: The Probability Engine

A procedural system built strictly on math will inevitably look artificial. Nature is defined by imperfections. To make the lotus pond feel organic, I had to build a probability engine into the core architecture, layering randomization at the macro, meso, and micro levels, while keeping it strictly bound by art-directable constraints.

Macro: The Ecosystem Scatter

At the top level, the execute_scatter function evaluates every valid point and rolls a 50/50 probability to determine if it will spawn a leaf or a flower. Once decided, it applies a randomized global rotation (0–360 degrees) and a non-uniform scale variance (+/- 5%) to ensure no two assets share the exact same silhouette or footprint.
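A rough sketch of that per-point roll is shown below. The probabilities and ranges are the ones stated above; the function name, the returned dictionary layout, and the choice of Y as the rotation axis are assumptions.

```python
import random


def roll_placement(rand):
    """Pick an asset type plus a random Y rotation and per-axis scale."""
    kind = "leaf" if rand.random() < 0.5 else "flower"
    rotate_y = rand.uniform(0.0, 360.0)
    # Independent factors per axis give the non-uniform +/- 5% variance.
    scale = tuple(rand.uniform(0.95, 1.05) for _ in range(3))
    return {"kind": kind, "rotate_y": rotate_y, "scale": scale}


rand = random.Random(42)
placements = [roll_placement(rand) for _ in range(200)]
```

Passing in a seeded random.Random instance, rather than using the module-level functions, means a layout can be regenerated identically from the same seed.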

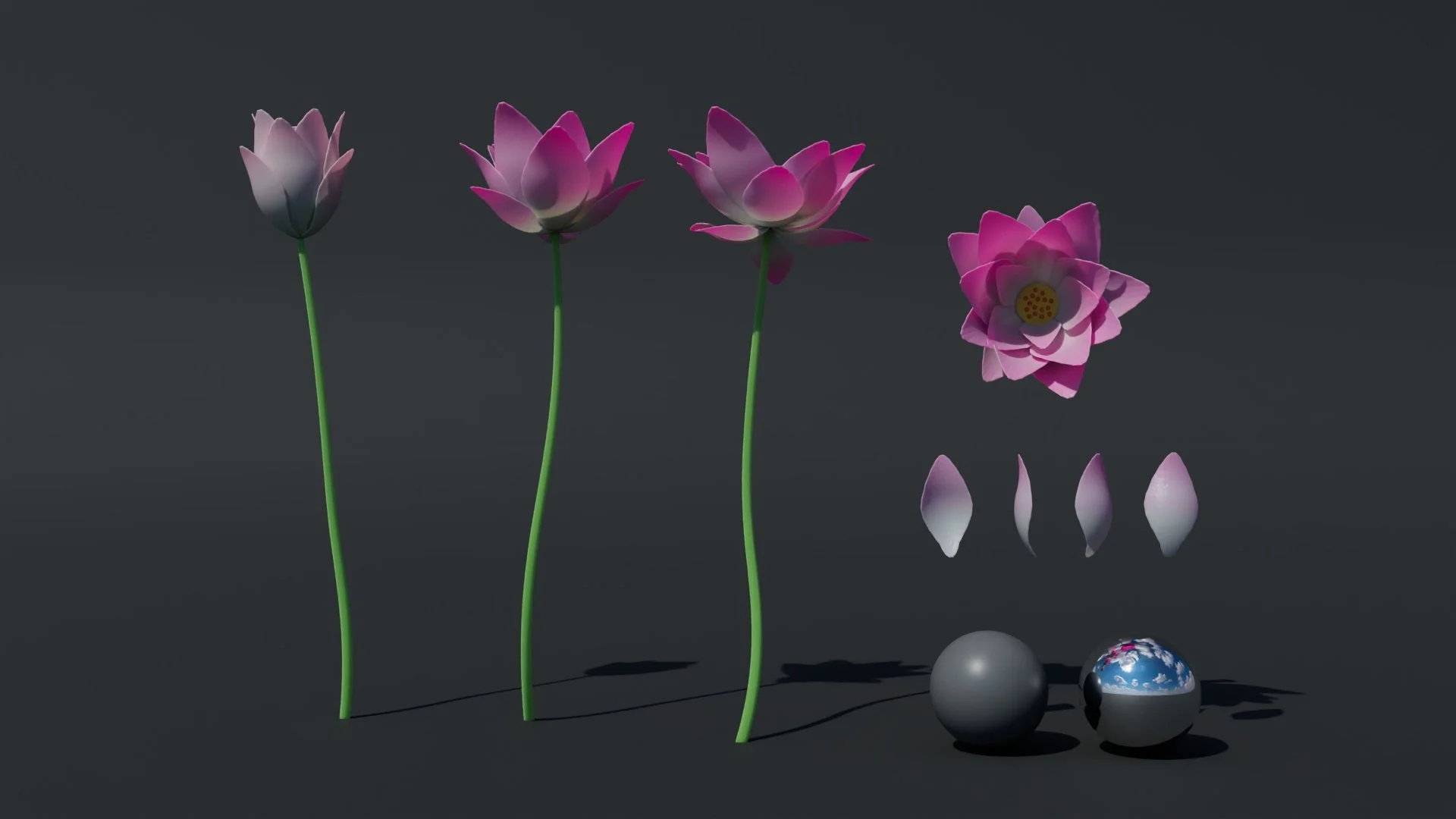

Meso: The Continuous Bloom State

Flower bloom state and color, controlled by the bloom factor.

The most complex randomization happens in the flower generation. Instead of building separate functions for a "bud," a "half-open flower," and a "full bloom," I designed a continuous probability engine driven by a single bloom_factor float.

First, the system rolls a 30% chance to generate a closed bud (bloom_factor between 0.0 and 0.1). Otherwise, it generates an open flower (bloom_factor between 0.6 and 1.2). This single continuous variable is then injected into the shape nodes and read by the Arnold shaders to dynamically adjust the subsurface scattering and petal color saturation based on the flower's exact age. Furthermore, the generator includes a 5% chance to randomly skip a petal during the phyllotaxis spiral loop, simulating natural environmental damage.
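The two rolls described above can be sketched in plain Python as follows. The percentages and bloom ranges come from this post; the function names, the petal count, and the golden-angle divergence of about 137.5 degrees are assumptions, since the post does not state which angle the spiral uses.

```python
import random

GOLDEN_ANGLE = 137.50776  # degrees; assumed, the post does not state the divergence angle


def roll_bloom_factor(rand):
    """30% closed bud (0.0 to 0.1), otherwise an open flower (0.6 to 1.2)."""
    if rand.random() < 0.3:
        return rand.uniform(0.0, 0.1)
    return rand.uniform(0.6, 1.2)


def petal_angles(petal_count, rand, skip_chance=0.05):
    """Angles along a phyllotaxis spiral, randomly dropping some petals."""
    angles = []
    for index in range(petal_count):
        if rand.random() < skip_chance:
            continue  # simulate a missing or damaged petal
        angles.append((index * GOLDEN_ANGLE) % 360.0)
    return angles


rand = random.Random(7)
factors = [roll_bloom_factor(rand) for _ in range(100)]
angles = petal_angles(24, rand)
```

Note the deliberate gap between 0.1 and 0.6: with this sampling no flower is ever generated in a half-open state, even though the downstream geometry and shading treat bloom_factor as continuous.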

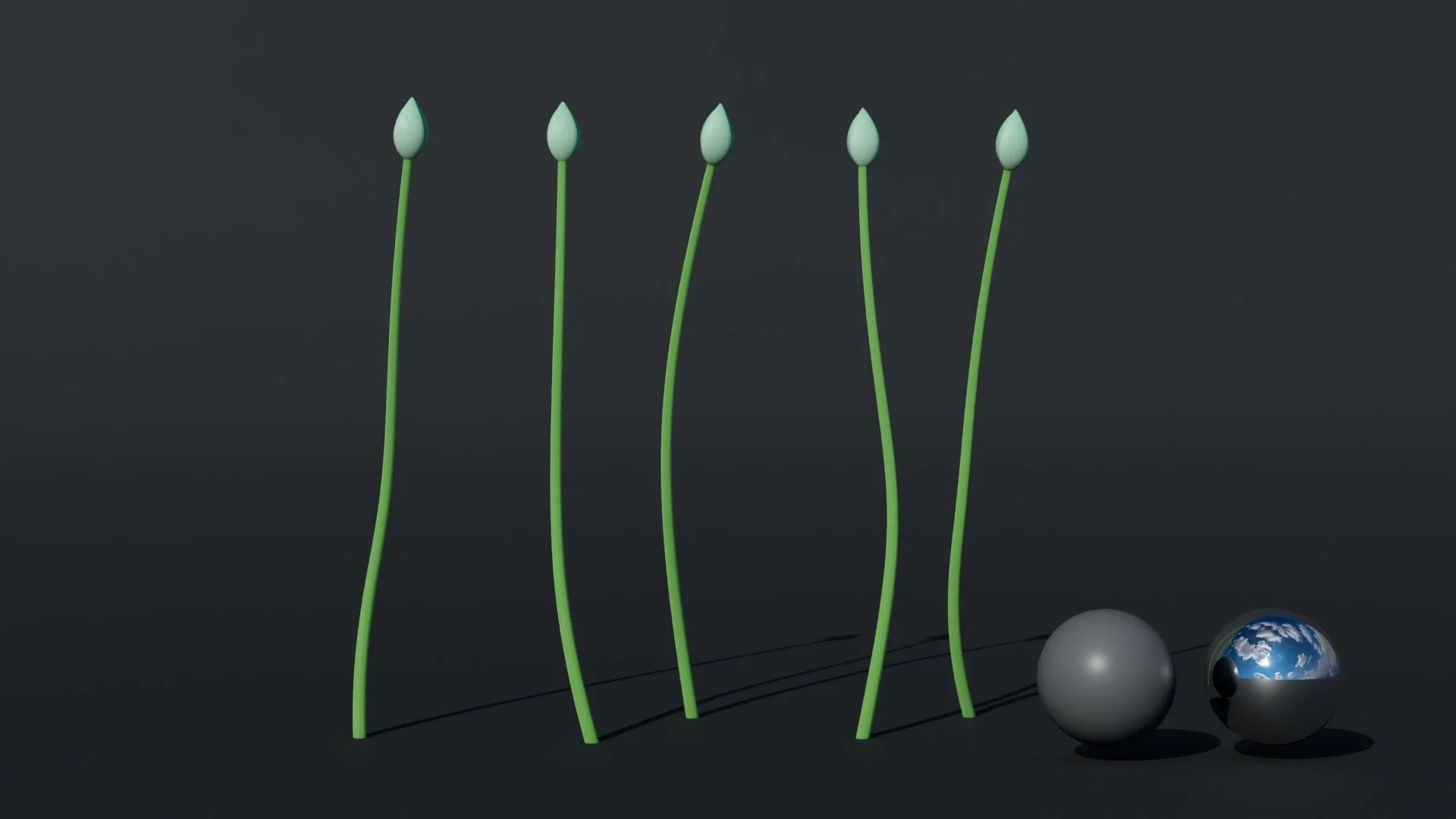

Structural: The Universal Stem & Tangent Alignment

To keep the architecture efficient, both the leaves and flowers share a single, universal stem generation function. The script plots five points downward, applying an escalating X and Z noise multiplier to the lower points to simulate organic, water-swept growth, while keeping the top connection point strictly locked. To ensure the heavy geometry doesn't look artificially pasted on, the system performs a mathematical snap: it queries the normalized tangent vector at the very top of the generated stem curve (parameter 0.0), calculates the Euler rotation offset, and perfectly aligns the leaf or flower's up-vector to the stem’s exact trajectory.

Stem and bud. The stem function is shared across buds, leaves, and flowers.
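The stem logic can be approximated outside Maya with a few lines of vector math. In this sketch the function names, the linear noise ramp, and the drift amount are assumptions, and the tangent is estimated from the top two points of the polyline rather than queried from a NURBS curve as the tool does.

```python
import math
import random


def build_stem_points(top, length, rand, max_drift=2.0, count=5):
    """Return `count` points from `top` straight down, drifting more with depth."""
    points = []
    for i in range(count):
        t = i / (count - 1)            # 0.0 at the top, 1.0 at the bottom
        drift = max_drift * t          # the top point gets zero noise, so it stays locked
        points.append((
            top[0] + rand.uniform(-drift, drift),
            top[1] - length * t,
            top[2] + rand.uniform(-drift, drift),
        ))
    return points


def top_tangent(points):
    """Normalized direction from the second point up to the first (top) point."""
    dx, dy, dz = (points[0][k] - points[1][k] for k in range(3))
    norm = math.sqrt(dx * dx + dy * dy + dz * dz)
    return (dx / norm, dy / norm, dz / norm)


rand = random.Random(3)
stem = build_stem_points((0.0, 50.0, 0.0), 50.0, rand)
tangent = top_tangent(stem)
```

The resulting unit vector is what the leaf or flower's up-vector would be aligned to. Converting it into Euler angles is left out here; inside Maya, the angleBetween command with its euler flag is one possible route.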

Micro: Seamless Harmonics and Noise

At the vertex level, pure randomness creates broken geometry. The noise had to be mathematically seamless. For the wavy deformations on the edges of the lotus leaves, I used compound sine and cosine harmonics. A wave of the form sin(k * theta) only returns to its starting value after a full 360-degree turn when k is a whole number, so to prevent the topology from tearing at the seam, the randomized frequencies are drawn as integers:

Leaf pattern.

    # Amplitudes are randomized as floats for varied wave height
    r_amp1 = amp1 * rand.uniform(0.7, 1.3)
    # Frequencies MUST be integers to close the 360-degree loop seamlessly
    r_freq1 = float(rand.randint(6, 8))
    r_freq2 = float(rand.randint(8, 12))

This approach ensures every leaf cup has a unique depth and ripple pattern, while remaining structurally flawless.
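A quick numerical check shows why the integer constraint matters. The way the two harmonics are summed and the base amplitudes below are assumptions; the ranges match the snippet above.

```python
import math
import random


def edge_offset(theta, amp1, freq1, amp2, freq2):
    """Vertical rim displacement at angle theta (radians)."""
    return amp1 * math.sin(freq1 * theta) + amp2 * math.cos(freq2 * theta)


rand = random.Random(11)
amp1 = 1.0 * rand.uniform(0.7, 1.3)   # hypothetical base amplitude of 1.0
amp2 = 0.5 * rand.uniform(0.7, 1.3)   # hypothetical base amplitude of 0.5
freq1 = float(rand.randint(6, 8))
freq2 = float(rand.randint(8, 12))

# Integer frequencies: the offset at 0 and at a full turn agree.
seam_gap = abs(edge_offset(0.0, amp1, freq1, amp2, freq2)
               - edge_offset(2.0 * math.pi, amp1, freq1, amp2, freq2))

# A fractional frequency (6.25) leaves a visible step at the seam.
broken_gap = abs(edge_offset(0.0, amp1, 6.25, amp2, freq2)
                 - edge_offset(2.0 * math.pi, amp1, 6.25, amp2, freq2))
```

With whole-number frequencies seam_gap is zero up to floating-point error, while the fractional case produces a jump equal to the full first amplitude, which is exactly the tear the constraint avoids.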

06. Pipeline Philosophy: Adaptable Always

A common trend in portfolio projects is wrapping scripts in a complex custom UI. For this initial version, I made a deliberate choice to keep the system "headless"—driven entirely by a master Python dictionary (lotus_config).

My goal was to ensure the core logic, mathematical topology, and data flow were bulletproof before building a front-end. By isolating the configuration data from the execution logic, the tool functions as a self-contained engine. That being said, production pipelines are fluid. Because the logic is completely decoupled, wrapping this generator in a PySide2/PyQt interface for layout artists, or hooking it directly into a studio's shot database for batch processing, would be a straightforward and highly adaptable next step depending on a production's specific needs.

07. Known Limitations & Future Iterations

Writing this tool was a massive learning experience, and stress-testing the code highlighted a few critical areas where the architecture must be optimized for a true production environment:

Execution Overhead (The Cost of Uniqueness): Currently, the script executes the entire mathematical growth sequence for every single point, generating a 100% unique topological variation for each plant. While this guarantees incredible fidelity for hero close-ups, the execution overhead is far too heavy for a large-scale environment. A necessary pipeline optimization for medium-to-wide shots would be pre-generating a controlled pool of variations (e.g., 10 unique leaves, 5 unique flowers) and then intelligently duplicating or instancing them across the point cloud. This would drastically decrease runtime while maintaining the illusion of infinite variety.
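The pooling idea could look roughly like this. It is a sketch of a proposed optimization, not something the tool does today; the variant names, grid of points, and assign_from_pool helper are all hypothetical.

```python
import random


def assign_from_pool(points, pool, rand):
    """Map each scatter point to one pre-built variant chosen at random."""
    return [(point, rand.choice(pool)) for point in points]


# Hypothetical variant names; in Maya these would be the pre-generated source meshes.
leaf_pool = ["leaf_var_{:02d}".format(i) for i in range(10)]
points = [(x * 40.0, 0.0, z * 40.0) for x in range(20) for z in range(20)]
assignments = assign_from_pool(points, leaf_pool, random.Random(5))
```

Here 400 points share 10 generated leaves, so the expensive L-system evaluation runs 10 times instead of 400. In Maya, each assignment could then become an instance of its source mesh (for example via cmds.instance), with the per-point rotation and scale variance hiding the repetition.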

Engine Upgrades (The Unknowns): When I analyzed this code with AI to find its weakest links, it strongly suggested that the next evolution for this tool would be porting the geometry generation to the Maya Python API 2.0 (maya.api.OpenMaya) for faster execution, or migrating the instancing logic to Bifrost or native MASH networks. I will be completely transparent: I do not currently know how to implement OpenMaya or build Bifrost graphs at that level. However, identifying these industry-standard solutions has given me a precise roadmap for my next phase of technical learning.

Topological Collision: The current point-filtering algorithm utilizes a fast 2D distance check on the XZ plane to prevent overlapping. A future update should involve raycasting to evaluate complex 3D terrain slopes, ensuring plants align correctly with steep riverbanks rather than floating in space.
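For reference, a fast 2D check of the kind described can be written as a greedy filter. This is a sketch under assumptions: the function name, the minimum spacing value, and the first-come-first-kept ordering are illustrative, not taken from the tool.

```python
def filter_points_xz(points, min_distance):
    """Greedy filter: keep a point only if it clears every kept point on XZ."""
    kept = []
    limit_sq = min_distance * min_distance
    for point in points:
        # Compare squared distances to avoid a square root per pair; Y is ignored.
        clear = all(
            (point[0] - other[0]) ** 2 + (point[2] - other[2]) ** 2 >= limit_sq
            for other in kept
        )
        if clear:
            kept.append(point)
    return kept


candidates = [(0, 0, 0), (10, 0, 0), (100, 50, 0), (5, 0, 5), (100, 0, 60)]
survivors = filter_points_xz(candidates, 30.0)
```

The third candidate survives even though it sits 50 units above the plane, which illustrates the limitation noted above: height is never considered. The pairwise comparison is also quadratic in the number of points, so a spatial grid would be a sensible upgrade for very dense scatters.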

08. The Modern Workflow: AI as a Pair-Programmer

Finally, I want to touch on how this tool was built. We are in an era where AI is fundamentally changing development workflows, and I actively use LLMs as a collaborative pair-programmer.

I do not use AI to write monolithic scripts for me; I use it to map my blind spots and rapidly stress-test my logic. As mentioned above, AI is exactly what pointed me toward OpenMaya and Bifrost when I asked how a major studio would handle my execution overhead.

When I was struggling to conceptualize the LIFO stack memory for the L-system, or when I needed to untangle the undocumented quirks of Maya's sweepMesh API, I used AI to bounce ideas back and forth. Engineering the "set-difference" method to capture the ghost nodes generated by MEL was a direct result of this collaborative debugging. Treating AI as a sounding board allows me to iterate faster, learn complex math (like the phyllotaxis spiral) more intuitively, and focus my energy on high-level system architecture rather than getting derailed by syntax errors.

If you are curious how I interact with Gemini, you can read our dialogue here: https://gemini.google.com/share/0cb2e238a48a

09. Conclusion: Systems Over Assets

Ultimately, this procedural lotus generator is less about the final render and more about the underlying architecture. The transition toward becoming a Technical Artist or FX TD requires a fundamental shift in mindset: you have to stop thinking exclusively about how to build a single beautiful asset, and start thinking about how to design a resilient system that can generate thousands of them without breaking.

While there is still plenty of room for optimization, this project solidified my foundational understanding of algorithmic topology, pipeline data flow, and the critical bridge between Python logic and native software APIs.

The goal is never just to write a script; it is to design a workflow. If the system is built with modularity, strict spatial constraints, and a clear mathematical blueprint, the art will naturally follow.

Thank you for reading through this technical breakdown. If you are interested in discussing procedural ecosystems, pipeline architecture, or tool development, feel free to connect with me.